Your Click Isn’t Free: Inside the Massive Energy Drain of AI

Behind every click, stream, or AI prompt sits a sprawling network of data centers—out of sight, but pulling in enormous amounts of electricity and water to keep the digital world humming. And the appetite is growing fast. Global data center energy use is projected to jump from about 448 terawatt-hours (TWh) in 2025 to nearly 980 TWh by 2030, fueled largely by the industrial-scale expansion of AI. By then, AI-optimized servers alone could account for roughly 44% of total data center power demand.
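To see what that projection implies year by year, here is a back-of-the-envelope sketch in Python. It assumes, purely for illustration, that demand grows at a steady compound rate between the two endpoints. The forecast itself gives only the 2025 and 2030 figures, not the path in between. The helper name `implied_annual_growth` is hypothetical and not taken from any source.

```python
def implied_annual_growth(start: float, end: float, years: int) -> float:
    """Compound annual growth rate needed to go from `start` to `end` in `years` years."""
    return (end / start) ** (1 / years) - 1

# Projection figures quoted above, in terawatt-hours (TWh).
START_TWH = 448.0  # 2025
END_TWH = 980.0    # 2030
YEARS = 2030 - 2025

# Assumption: smooth compound growth between the two endpoints.
growth_multiple = END_TWH / START_TWH
cagr = implied_annual_growth(START_TWH, END_TWH, YEARS)

# Share of the 2030 total attributed to AI-optimized servers (~44%).
AI_SHARE_2030 = 0.44
ai_twh_2030 = END_TWH * AI_SHARE_2030

print(f"Growth multiple 2025->2030: {growth_multiple:.2f}x")
print(f"Implied annual growth rate: {cagr:.1%}")
print(f"AI-optimized servers in 2030: ~{ai_twh_2030:.0f} TWh")
```

Run as written, it shows 2030 demand at roughly 2.2 times the 2025 level, an implied growth rate of about 17% a year, and on the order of 430 TWh attributable to AI-optimized servers alone.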

That surge is putting real pressure on infrastructure, especially on resources most people never associate with the internet. Water is a big one. A typical mid-sized facility can use as much water as a small town, while the largest operations consume up to 5 million gallons a day, about what a city of 50,000 people would need. Most of that water goes to cooling systems, which keep high-powered computing equipment from overheating.

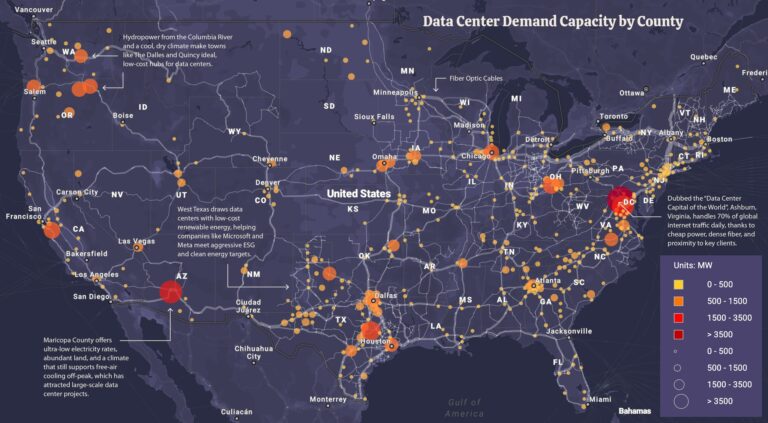

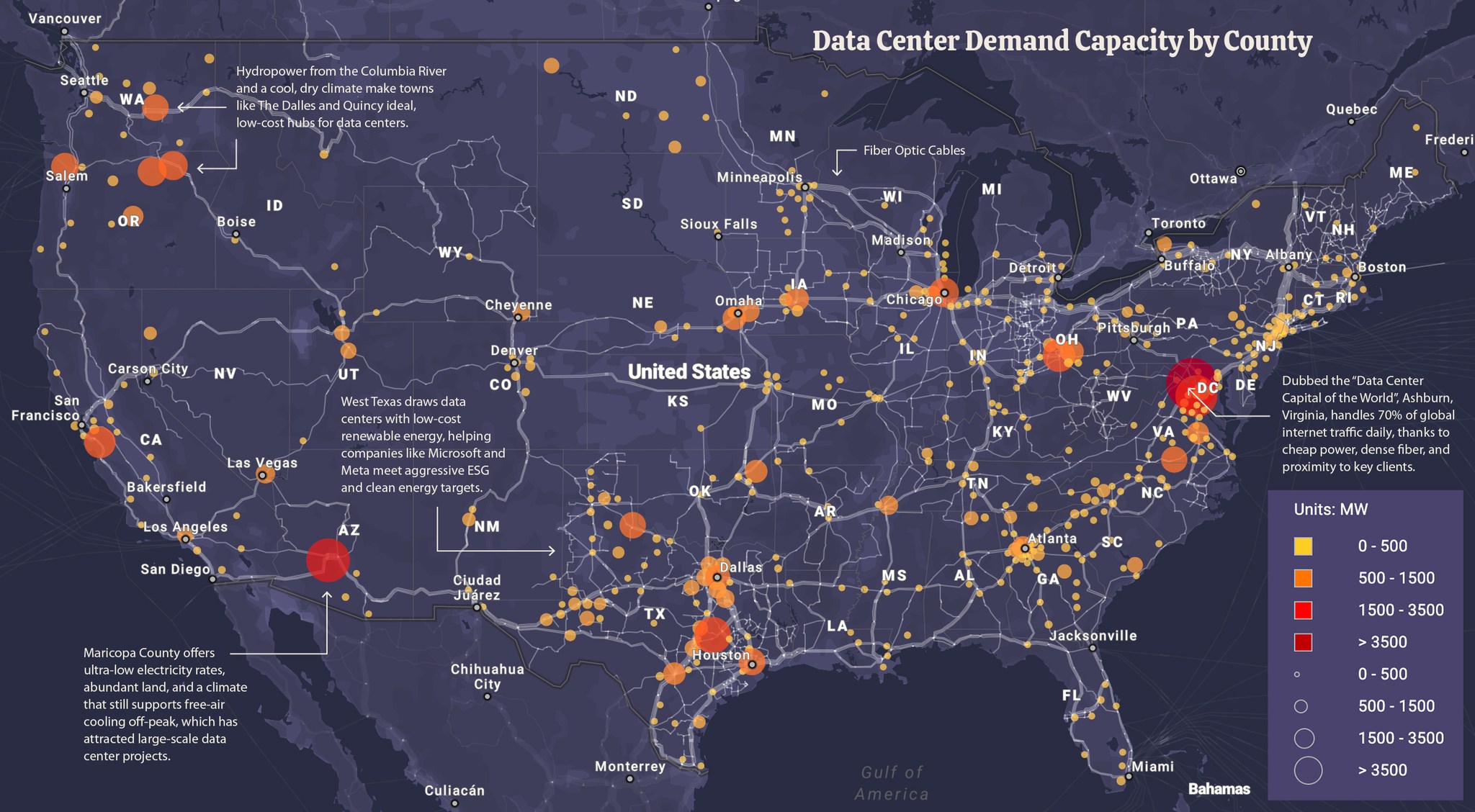

Where these centers get built has less to do with population and more to do with logistics and economics. The big hubs—Northern Virginia, Texas, California, Western Europe, and parts of East Asia—share a few key advantages:

- Access to cheap, reliable electricity

- Ample water supply for cooling

- Proximity to major fiber routes and subsea cables

- Business-friendly zoning and tax incentives

Northern Virginia stands in a league of its own, by one frequently cited estimate handling as much as 70% of global internet traffic. That dominance comes from a combination of low-cost power, dense fiber infrastructure, and proximity to federal agencies and major contractors in the Washington, D.C. area. The digital backbone of institutions like the Pentagon and the intelligence agencies often runs through server farms in the nearby Virginia suburbs.

A major driver of this explosion is AI training, which requires thousands of high-performance chips running nonstop for weeks or even months. Yet most of the ongoing energy demand comes not from training but from usage. According to MIT Technology Review, AI inference (the work a trained model does each time it processes a new input) accounts for roughly 80–90% of AI-related computing power. To put that in perspective, by widely cited estimates a standard Google search uses about 0.3 watt-hours of electricity, while a query to a large language model can use closer to 2.9 watt-hours, nearly ten times as much.
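The per-query gap matters because inference happens at enormous scale. The rough sketch below multiplies the two per-query estimates by a hypothetical volume of one billion requests a day. That volume and the helper `daily_energy_mwh` are illustrative assumptions, not figures from any source, and the result is arithmetic, not a measurement.

```python
# Per-query energy estimates quoted above, in watt-hours (Wh).
SEARCH_WH = 0.3
LLM_QUERY_WH = 2.9

# Hypothetical daily request volume, chosen only for illustration.
QUERIES_PER_DAY = 1_000_000_000

def daily_energy_mwh(wh_per_query: float, queries: int) -> float:
    """Total daily energy in megawatt-hours for a given per-query cost."""
    return wh_per_query * queries / 1_000_000  # 1 MWh = 1,000,000 Wh

ratio = LLM_QUERY_WH / SEARCH_WH
search_mwh = daily_energy_mwh(SEARCH_WH, QUERIES_PER_DAY)
llm_mwh = daily_energy_mwh(LLM_QUERY_WH, QUERIES_PER_DAY)

print(f"LLM query vs. search: {ratio:.1f}x")
print(f"1B searches/day:    {search_mwh:,.0f} MWh")
print(f"1B LLM queries/day: {llm_mwh:,.0f} MWh")
```

At that assumed volume, the same billion requests would take about 300 MWh a day as searches but about 2,900 MWh as LLM queries, a ratio of roughly 9.7.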

Meanwhile, the tech giants behind this boom (Google, Microsoft, Amazon, and Meta) have all made high-profile climate pledges, ranging from carbon neutrality to net-zero emissions. But reality hasn’t exactly cooperated. Since those commitments were made around 2019–2020, their emissions have moved in the opposite direction: Google’s are up nearly 50%, Meta’s more than 60%, Amazon’s about 33%, and Microsoft’s more than 23%, according to recent reporting.

In short, the digital world may feel weightless—but the infrastructure behind it is anything but.